Project background

Our IPCC research goal is to obtain the quickest time to trained model for Deep Learning (DL) approaches based on Convolutional Neural Network (CNN) architectures, on large Image Recognition datasets, using modern technologies from the Intel High Performance Computing (HPC) ecosystem like the Knights Landing (KNL) / Skylake CPU architectures and the OPA interconnect fabric. Presently, we are focusing on models optimized with Stochastic Gradient Descent (SGD), although we are also interested in porting and generalizing our techniques to other optimization methods, as well as older Intel architectures such as Haswell/Broadwell.

The first challenge in achieving this goal is scaling efficiently to as many nodes as possible. In our view this means reaching a reasonable final convergence value (~74% for a Resnet-50 network on Imagenet1k), while sustaining a high hardware and scaling efficiency. This topic has been actively pursued by the DL community ever since G. Hinton’s rmsprop mini-batch training. Recent research [1][2][3][4][5] showcases a variety of scaling and optimization techniques with various degrees of success on several computer architectures. In terms of final accuracy, all these approaches are comparable via the ImageNet (1k-22k) competition dataset (ILSVRC12, ILSVRC14). Additionally, there are new results from LBNL (Prabhat et al.) and Intel (Pradeep Dubey et al.) using 9600 KNL nodes on a real-life climate problem [5].

Initially, we focused on obtaining the quickest time to train for the GoogLeNet-v1 deep learning architecture on the ImageNet-1K dataset using the Intel optimized branch of Caffe [6]. Most of the experimentation was performed on Stampede at the Texas Advanced Computing Center (TACC). This gave us valuable insights into the types of scaling problems we might encounter for architectures with more parameters and larger datasets (such as storage I/O, convergence, etc.). Perhaps the biggest problem was that GoogLeNet-v1 needs a local batch size of approximately 64-96 in order to achieve high hardware efficiency, and validation accuracy was severely degraded with a global batch size greater than 2048-4096. We tried several options, such as warm starts, aggressive learning rate schedules, increased network capacity and data augmentation, but we could not achieve reasonable accuracies for batches larger than 8K. This limited the total number of nodes usable for training GoogLeNet-v1 to around 64. However, one interesting observation from these experiments was that, in order to reach good convergence values for large global batches (which require, for example, larger learning rates), it is key that the network design includes batch normalization layers.

With this in mind, we switched our attention to the very popular residual network architectures, as these are designed to include batch normalization layers and achieve higher validation accuracies than GoogLeNet-v1. Another advantage of these residual networks is that hardware efficiency is maintained even for smaller local batch sizes of around 16-32, allowing scaling out to even more nodes. Also, from a design standpoint, the high regularity of the blocks composing the network makes it easy to experiment with wider and deeper variants. Therefore, we also evaluated derivative network architectures (particularly wide networks) on larger and more challenging datasets such as Imagenet-22K [7] and Places-365 [8].

Distributed training proof of concept using Intel’s VLAB cluster

During the May/June 2017 period, we obtained access to Intel’s internal cluster, VLAB, composed of about 250 Xeon Phi 7250 KNL nodes, each with 192 GB RAM (HBM configured in flat mode) and 750GB of local SSD. Local SSD is crucial (and absent on other clusters) for training large deep learning networks on large datasets that don’t fit in RAM. We will go into more details in the following sections.

Our first task was to train Resnet-50 on the same Imagenet-1K dataset. Resnet-50 is about three times more computationally expensive than GoogLeNet-v1. After applying some of the techniques developed on Stampede, we managed to scale Resnet-50 to around 200 nodes by ISC2017 in June. However, those results showed lower validation accuracy than expected (a 3-4% decrease in top-1 accuracy) when using large batch sizes of 6K-8K samples. We attribute this drop in accuracy to the fact that the large global batch size requires a large learning rate, while at the worker level, ignoring the data-parallel context, the batch size is small. In our experiments we used a local (worker) batch size of at most 32 images. One way to deal with this problem is batch normalization [9], which also acts as a regularizer. The technique has some problems, though: it uses different parameters to normalize the output during training and inference, and it is sensitive to small mini-batches (we noticed this for sizes below 16). Most notably, in a metric learning scenario, for a mini-batch of size 32, if we randomly select 16 labels and then choose 2 examples for each of these labels, the examples interact at every layer, which may cause the model to overfit to the specific distribution of mini-batches and suffer when used on individual examples. The problem with using moving averages in training is that gradient optimization and normalization then act in opposite directions, which leads to the model blowing up. A possible solution might be batch renormalization [10], and we will explore this approach in future work.

The batch normalization implementation of Intel Caffe has a “moving average fraction” parameter. By empirically tuning this parameter, and by including a warm-up phase and additional data augmentation, we managed to avoid overfitting and achieved state-of-the-art accuracies even for global batch sizes greater than 10K, going up to batch sizes of 25K with relatively minor losses in accuracy.
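To illustrate what a moving-average fraction controls, here is a minimal NumPy sketch of a generic exponential-moving-average update of batch-norm statistics. It is not the Intel Caffe implementation; the function name `update_running_stats`, the value `0.95`, and the synthetic data are assumptions chosen only for illustration.

```python
import numpy as np


def update_running_stats(running_mean, running_var, batch, fraction):
    """One exponential-moving-average update of batch-norm statistics.

    `fraction` plays the role of a moving-average fraction (hypothetical
    name): values close to 1 make the running statistics change slowly,
    smaller values follow the current mini-batch more closely.
    """
    batch_mean = batch.mean(axis=0)
    batch_var = batch.var(axis=0)
    new_mean = fraction * running_mean + (1.0 - fraction) * batch_mean
    new_var = fraction * running_var + (1.0 - fraction) * batch_var
    return new_mean, new_var


# Synthetic activations with true mean 3 and variance 4, local batch of 32.
rng = np.random.default_rng(0)
mean, var = np.zeros(4), np.ones(4)
for _ in range(200):
    batch = rng.normal(loc=3.0, scale=2.0, size=(32, 4))
    mean, var = update_running_stats(mean, var, batch, fraction=0.95)

print("running mean:", mean)
print("running variance:", var)
```

With a small local batch, each batch estimate is noisy, so the fraction trades off how quickly the inference-time statistics adapt against how much of that noise they absorb.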

Additionally, we have experimented with Wide Residual Networks of various sizes, as these achieve higher accuracy, and can leverage this large-node-count scenario by having a high parameter count. Also, we have noticed that it is much more effective to use polynomial decay for the learning rate schedule (same as in the warm up phase), instead of the classic 3-step 10-fold decrease of the learning rate that is used in many of the research papers. This actually allows us to train residual networks with fewer epochs, and still achieve high, close to state-of-the-art (SOTA) validation accuracies. Some example accuracies we have obtained for Imagenet-1K: 73.2% top-1 in 29 epochs, 74% in 37 epochs, etc. Being able to algorithmically decrease the amount of work (number of training iterations) needed to train deep neural networks is clearly an avenue that we will follow in future work.
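The schedule described above can be sketched as a warm-up phase followed by polynomial decay. The sketch below assumes a linear warm-up and the common form `peak_lr * (1 - progress) ** power`; the exact warm-up shape, the power, and all numeric values used in our runs are not specified here, so every parameter in this example is hypothetical.

```python
def learning_rate(iteration, max_iter, peak_lr, warmup_iters,
                  power=2.0, start_lr=0.0):
    """Learning rate at a given iteration (illustrative sketch).

    Phase 1: linear warm-up from `start_lr` to `peak_lr` (an assumption).
    Phase 2: polynomial decay from `peak_lr` down to 0 at `max_iter`.
    """
    if iteration < warmup_iters:
        return start_lr + (peak_lr - start_lr) * iteration / warmup_iters
    progress = (iteration - warmup_iters) / (max_iter - warmup_iters)
    return peak_lr * (1.0 - progress) ** power


# Hypothetical run: 1000 iterations, 100 of them warm-up, peak rate 3.2.
for it in (0, 50, 100, 550, 1000):
    print(it, learning_rate(it, max_iter=1000, peak_lr=3.2, warmup_iters=100))
```

Unlike a step schedule, this decay lowers the learning rate continuously, so shortening the run only requires changing `max_iter`.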

Resnet-50 on Imagenet-1K using production-grade supercomputers (Stampede2/MareNostrum 4)

After experimenting with these very powerful techniques on the VLAB research cluster, we decided to port them to production HPC systems, and started using the Stampede2 system at TACC for this purpose. Stampede2 features 4200 Xeon Phi 7250 nodes connected with the Intel OPA fabric, but each node has only 96GB RAM (HBM configured in cache mode). Since we were concerned with Imagenet-1K, which is a 42GB compressed LMDB dataset, we could safely copy the dataset to RAM at the beginning of the job, which clearly improves execution efficiency. We note that this might be a problem for larger datasets, and will discuss this further when presenting the Imagenet-22K and Places-365 experiments. However, all experiments in this section use Resnet-50 and Imagenet-1K.

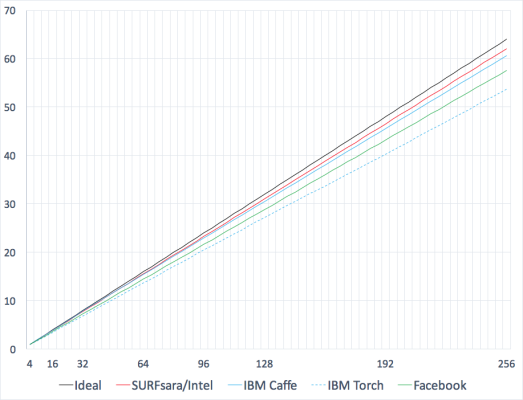

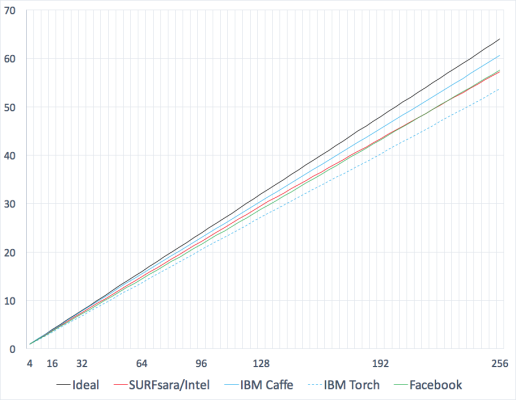

We have replicated the 256-worker experiments of Facebook [3] and IBM [4], and achieved 73.2% top-1 accuracy in 41 minutes, and 74% top-1 in about 50 minutes. Of course, one has to keep in mind that the KNL devices have half the peak performance of the NVIDIA P100 GPUs that were used by both Facebook and IBM. Moreover, we achieve above 97% scaling efficiency when going from 1 to 256 nodes. This can be visualized in Figure 1, where we also compare our speed-up ratios with the ones from Facebook and IBM.
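For readers unfamiliar with the metric, scaling efficiency is the measured speed-up divided by the ideal (linear) speed-up. The short sketch below shows the arithmetic; the throughput numbers are invented for illustration and are not measurements from our runs.

```python
def scaling_efficiency(throughput_single, throughput_multi, nodes):
    """Fraction of ideal linear speed-up achieved on `nodes` nodes."""
    speedup = throughput_multi / throughput_single
    return speedup / nodes


# Hypothetical throughputs in images per second (not measured values).
single_node = 100.0
on_256_nodes = 24832.0

efficiency = scaling_efficiency(single_node, on_256_nodes, nodes=256)
print(f"speed-up: {on_256_nodes / single_node:.2f}x, efficiency: {efficiency:.2%}")
```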

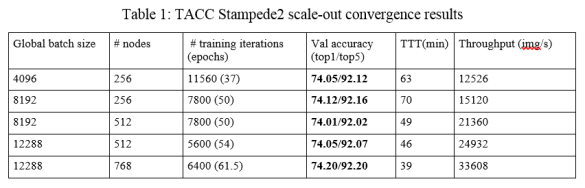

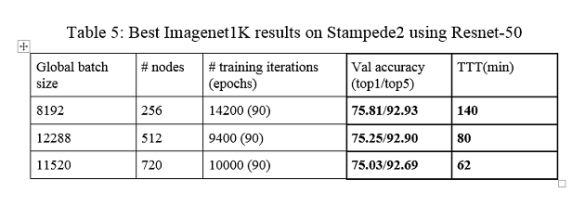

But then we decided to go even further and see how far we can get with scaling Resnet-50. We have thus attempted 512 Xeon Phi node and 768 Xeon Phi node runs with a batch size of only 16 per node. This led to a global batch size of 12288 for the 768-node case. We achieve an accuracy level of 74.05/92.07 top-1/top-5 for the 512-node case within 46 minutes, and 74.20/92.20 for the 768-node case in only 39 minutes. You can find more details in the experimental results tables below.
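The relation between node count, local batch size and global batch size is simple arithmetic, sketched below together with the resulting number of iterations per epoch. The training-set size of 1,281,167 images is the standard ILSVRC2012 figure; the helper names are illustrative.

```python
import math

IMAGENET_1K_TRAIN_IMAGES = 1_281_167  # standard ILSVRC2012 training-set size


def global_batch_size(nodes, local_batch):
    """Global batch in synchronous data-parallel SGD, one worker per node."""
    return nodes * local_batch


def iterations_per_epoch(dataset_size, global_batch):
    """Number of SGD steps needed to see every image once."""
    return math.ceil(dataset_size / global_batch)


for nodes in (512, 768):
    batch = global_batch_size(nodes, local_batch=16)
    steps = iterations_per_epoch(IMAGENET_1K_TRAIN_IMAGES, batch)
    print(f"{nodes} nodes -> global batch {batch}, {steps} iterations per epoch")
```

At these batch sizes an epoch takes only on the order of a hundred iterations, which is why the number of epochs, and the learning rate schedule over them, dominates the time to train.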

Note that all our models are evaluated against the ILSVRC2012 validation data, which contains the blacklisted images that were removed in 2014. By using the ILSVRC2014 validation data, we consistently increase the top-1 validation accuracy by 0.3%-0.4%; thus all models trained for at least 45-50 epochs reach above 74.3% top-1 accuracy on the ILSVRC2014 validation set. Results using the above methodology and obtained on TACC’s Stampede2 are outlined in Table 1. We note that we’ve applied Facebook’s methodology for linearly scaling the learning rate for the experiments presented below, unless otherwise noted.
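As a reminder of what linear scaling means, the sketch below applies the rule from Goyal et al. [3]: the learning rate grows proportionally with the global batch size, starting from a reference rate of 0.1 for a batch of 256. The reference values are taken from that paper; the exact learning rates used in our runs are not listed here, so the printed values are only what the rule would suggest.

```python
def linearly_scaled_lr(global_batch, reference_lr=0.1, reference_batch=256):
    """Linear scaling rule: multiply the learning rate by the batch ratio."""
    return reference_lr * global_batch / reference_batch


# Global batch sizes for 256, 512 and 768 nodes at 16 images per node.
for batch in (4096, 8192, 12288):
    print(f"global batch {batch}: learning rate {linearly_scaled_lr(batch):.2f}")
```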

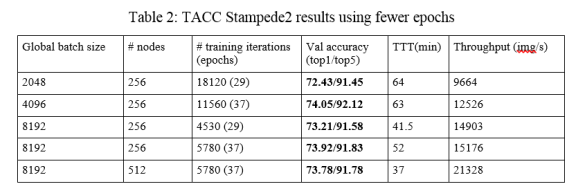

Moreover, as indicated in the previous section, we use some aggressive learning rate decay policies that allow us to decrease the number of training iterations, at the expense of minor accuracy losses. These experimental results are contained in Table 2.

Perhaps an interesting observation from the experimental results obtained on Stampede2 is that for the 256-node runs using a smaller local batch size of 16 (row 1 from Table 1 and row 2 from Table 2), one can achieve good validation accuracy using a relatively limited number of epochs (37). This result is very close to the Facebook/IBM ones in terms of time-to-train, achieving convergence at above 74% top-1 accuracy in just 63 minutes. Other interesting examples are the 256-node run with a local batch size of 32, which reaches this accuracy in 52 minutes, and the 512-node run with a local mini-batch of 16, which reaches slightly lower accuracy, albeit in 37 minutes.

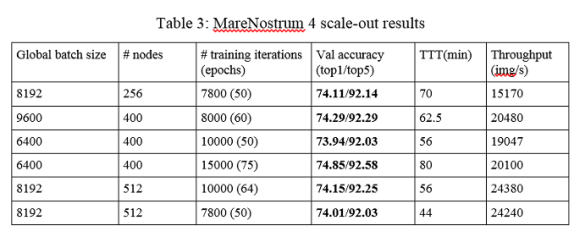

In parallel with the above experiments on Stampede2, we have also performed similar Imagenet1K experiments on Intel Xeon Skylake nodes on the MareNostrum 4 supercomputer at the Barcelona Supercomputing Center (BSC). MareNostrum 4 is composed of around 4000 2S Intel® Xeon® Processor 8160 (SKX) nodes, each containing 96GB RAM and around 200GB of local storage. As planned for our IPCC, we wanted to specifically evaluate deep learning training on traditional Xeon processors, as these form the backbone of most computing centers around the world. Thus, we chose the versatile Skylake architecture for this purpose.

The results were quite successful, leading to around 90% scaling efficiency when going from 1 to 256 nodes, as can be seen in Figure 2. Larger runs (256+ nodes), together with their validation accuracies and achieved time-to-train, are presented in Table 3. As in the Stampede2 case, all our trained models achieve a top-1 validation accuracy greater than 74% on the ILSVRC2012 validation set, and greater than 74.3% on the ILSVRC2014 one.

Some of the highlights of our results are:

- convergence in 70 minutes using 256 SKX nodes

- convergence in 56 minutes using 400 SKX nodes

- convergence in 44 minutes using 512 SKX nodes.

We note that the peak performance of the hardware used by Facebook and IBM in their experiments is more than double compared to 256 nodes of SKX, and thus our results show higher hardware efficiency.

Exploring Resnet-50 SOTA accuracy on the Imagenet-1K

Since we have had access to such a valuable resource (Intel’s VLAB cluster), we decided to perform a series of experiments that aim to match or improve the state of the art for several large datasets. However, since the VLAB cluster is configured in flat mode, compared with Stampede2’s cache mode, we decided to use VLAB more for our research runs that are mostly concerned with increasing the validation accuracy, and present timing results only from the Stampede2 and MareNostrum 4 production infrastructures.

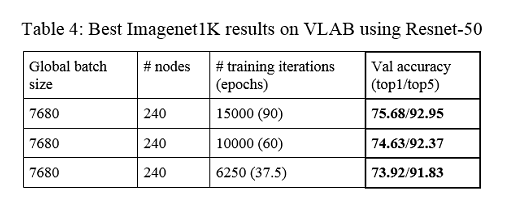

Table 4 below presents the SOTA validation accuracy results obtained on Imagenet-1K dataset using single-crop evaluation of Resnet-50 models on VLAB. We train the exact same model, with the same training strategy; the only parameter changed being the number of epochs to train. Training the model for the full 90 epochs leads to a top-1 validation error on the full ILSVRC2012 validation set of 24.32%. What is quite exciting is that we can achieve 26.6% error, using only a third of the training iterations, as explained in the previous section, although regular practice when increasing the batch size was to increase the number of training iterations.

To see the actual time-to-SOTA-accuracy, we have also replicated the SOTA convergence results on the Stampede2 production system, with various configurations (batch size, number of nodes). Although the runtime is longer due to the full 90-epoch training budget, we show that our training architecture is clearly capable of delivering SOTA accuracies. The timing, as well as the top-1/top-5 accuracy results, are outlined in Table 5 below. We note that our 75.81% accuracy is actually higher than the 75.3% obtained by the Resnet authors (although we use a much higher batch size).

Exploring Wide Residual Networks on the Imagenet-1K

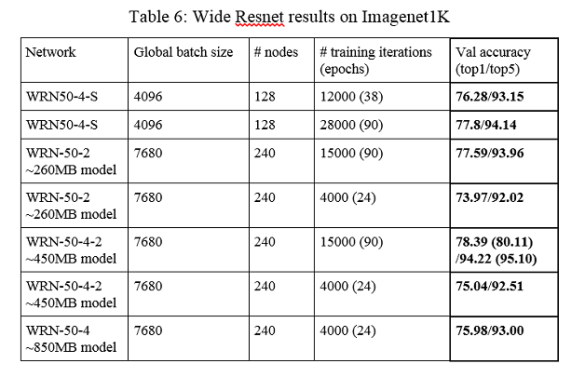

These types of runs motivated us to explore in more detail this interesting property that allows us to decrease the number of training epochs. We have thus decided to evaluate Wide Residual Networks (WRN), as those offer increased capacity at a low computational cost (compared to their counterparts with extra depth). They are interesting for us because they have the potential of requiring fewer epochs to capture additional properties from the inputs, despite having more parameters than their narrower counterparts. Table 6 presents our results on these larger network models. These experiments have led to trained models with very good accuracy, which is particularly interesting considering that all of them are trained on quite large batches.

What is striking here is that one can obtain better accuracy after 24 epochs with a wider network (last row from Table 6) than after a full 90-epoch run with a ResNet-50 (see the second row of Table 4). Another highlight here is the full WRN-50-4-2 run. This run in particular exhibited state-of-the-art accuracy, and thus we have decided to evaluate it in a simple 12-crop setting, leading to above 80%/95% top-1/top-5 accuracy (the numbers in parentheses from Table 6). We expect the larger WRN-50-4 to outperform these results, but we have not yet run it to completion. Besides the improved accuracy of these wider networks, they have another property that has not been fully explored yet in the present study. They are more computationally heavy, but they are more amenable to distributed computing, as more computation leads to better hardware efficiency even for local batches of 4-8 images, when compared to the traditional Resnet-50. This should allow us to scale even further (perhaps to 2048 nodes) using this fully synchronous SGD approach. As an indication of the time-per-iteration for these wide networks, the WRN50-4-S is only about 1.8-2x more computationally heavy than Resnet-50, but achieves a top-1 validation accuracy more than 2% higher in the same number of epochs.
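Multi-crop evaluation generally means running the model on several crops of the same image and averaging the per-class probabilities. The sketch below shows only that averaging step; which 12 crops are used and how the logits are produced are not described in this article, so the random logits and the function names are placeholders.

```python
import numpy as np


def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def multi_crop_predict(crop_logits):
    """Average class probabilities over crops.

    crop_logits has shape (num_crops, num_classes), one row per crop
    of a single image.
    """
    return softmax(crop_logits).mean(axis=0)


# Placeholder logits for 12 crops of one image and 1000 classes.
rng = np.random.default_rng(0)
crop_logits = rng.normal(size=(12, 1000))
probabilities = multi_crop_predict(crop_logits)
print("predicted class:", int(probabilities.argmax()))
```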

Large dataset state-of-the-art results using Wide Residual Networks Imagenet-22K/Places-365

Since we determined that these Wide Residual Networks have very good convergence and scalability properties, we wanted to see if this technique holds for larger (Places-365) and less regular datasets (Imagenet-22K).

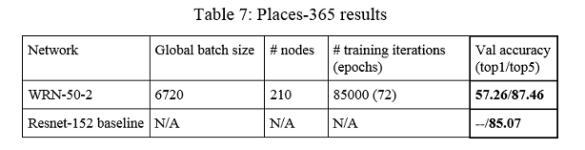

Places-365 is a scene classification dataset released by MIT, and it features around 8 million training images split across 365 scene categories. However, they are not evenly split, as is the case for Imagenet-1K. For validation, a standard 36500 image set is used, with 100 images per scene category. Since Places-365 is around 7 times larger than Imagenet-1K, the size of the compressed LMDB is around 206GB. This is too large to fit in RAM, so we are storing it locally on each node of the VLAB infrastructure. By using a wide residual network trained from scratch for 72 epochs, we have managed to achieve a top-5 error rate of 0.125 using a simple 12-crop evaluation, leading to one of the best single-model results from the competition leaderboard. As a comparison, the baseline Resnet-152 reaches a much higher 0.149 top-5 error. The results are presented in Table 7 below.
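For reference, a top-5 error of 0.125 corresponds to 87.5% top-5 accuracy: a prediction counts as correct when the true label is among the five highest-scoring classes. A minimal NumPy sketch of the metric follows; the tiny score matrix is made up for illustration.

```python
import numpy as np


def top_k_error(scores, labels, k=5):
    """Fraction of samples whose true label is not among the k best scores.

    scores: (num_samples, num_classes), labels: (num_samples,) integer ids.
    """
    top_k = np.argsort(scores, axis=1)[:, -k:]
    hits = (top_k == labels[:, None]).any(axis=1)
    return 1.0 - hits.mean()


# Three made-up samples over six classes.
scores = np.array([
    [0.1, 0.2, 0.3, 0.4, 0.5, 0.0],  # label 5 has the lowest score
    [0.9, 0.1, 0.2, 0.3, 0.4, 0.5],  # label 0 has the highest score
    [0.1, 0.9, 0.2, 0.3, 0.4, 0.5],  # label 5 is second best
])
labels = np.array([5, 0, 5])

print("top-1 error:", top_k_error(scores, labels, k=1))
print("top-5 error:", top_k_error(scores, labels, k=5))
```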

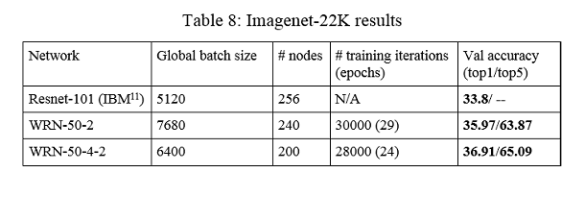

On the other hand, Imagenet-22K has many more categories (21841), and some classes are actually very poorly represented. It recently received media attention when IBM published a very good result, surpassing the previous record held by Microsoft. For Imagenet-22K we follow the standard practice (as described by IBM), and split the 14.2 million images into a 7.5 million training set and a 2 million testing set. This is also much bigger than Imagenet-1K, with a compressed LMDB reaching 225GB. As for Places-365, the dataset is copied locally onto each node of the VLAB infrastructure, as it is too big to fit in RAM. Although IBM surpassed Microsoft’s record by around 4% top-1 accuracy, we have achieved a similar improvement compared to IBM’s result. We have trained two wider networks for around 24-30 epochs, using relatively large batch sizes (6400-7680). The widest network reaches a record top-1 accuracy of 36.9% in only 24 epochs, while the thinner one reaches an accuracy of 36% (still much better than IBM’s result) in around 30 epochs. Both training runs were performed on the VLAB cluster, using 200 and 240 nodes respectively. As also noted by IBM, training a classifier for this dataset requires a very large output layer, as it has to contain neurons for all 21841 categories of Imagenet-22K. However, this does not decrease our throughput noticeably, as the IntelCaffe implementation based on Intel ML-SL overlaps communication with computation, and because the convolutional layers of these wider networks are also more computationally heavy, the communication is mostly hidden for this large layer as well. The experimental results for Imagenet-22K are presented in Table 8.
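To give a sense of how large such an output layer is, the sketch below counts the parameters of a fully connected classifier. It assumes a 2048-wide input feature vector, as in the standard Resnet-50; the feature width of the wide networks used here is not stated in this article and may differ.

```python
def fc_parameters(in_features, num_classes):
    """Weights plus biases of a fully connected classification layer."""
    return in_features * num_classes + num_classes


FEATURE_WIDTH = 2048  # assumption: standard Resnet-50 final feature width

for name, classes in (("Imagenet-1K", 1000), ("Imagenet-22K", 21841)):
    params = fc_parameters(FEATURE_WIDTH, classes)
    megabytes = params * 4 / 1e6  # 4 bytes per float32 parameter
    print(f"{name}: {params:,} parameters, about {megabytes:.0f} MB of gradients")
```

In synchronous data-parallel training the gradients of this layer have to be exchanged between workers on every iteration, which is why hiding that communication behind computation matters.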

Conclusion and future work

To conclude, using a combination of techniques (large global batch sizes, modified batch normalization, aggressive learning rate schedules, warm-up strategies, wide network topology architectures, etc.), we have achieved a scalable solution based on Intel’s distribution of Caffe and Intel ML-SL [11] (all running on top of Intel hardware) that provides state-of-the-art neural network training both in terms of time to trained model and in terms of the achieved accuracy. Moreover, our techniques allow us to make various trade-offs between the training time and the accuracy of the resulting model.

We have managed to obtain an 80%+/95%+ top-1/top-5 model for Imagenet1K, an impressive 87.5% top-5 accuracy on the Places-365 dataset, but perhaps the most notable result is the 36.91% top-1 model accuracy on Imagenet-22K using Wide Residual Networks. Both the Places-365 and Imagenet-22K models are establishing new single-model state-of-the-art, surpassing both Microsoft and IBM’s results by a large margin, using only general-purpose CPU-based hardware, as opposed to special accelerators.

Our ongoing research work focuses on improving our evaluation strategies by regularly using multi-crop evaluations, as even the limited multi-crop evaluation that we’ve performed so far gives major advantages. We are also looking at large ensembles, both for testing and for developing novel distribution techniques during training. At the moment the evaluation is done on a single node, and this becomes the limiting factor when evaluating large ensembles; therefore, we plan to extend the evaluation phase so that it makes efficient use of multiple nodes.

As additional future work we are planning to investigate more effective training strategies. Based on our own empirical results and inspired by research such as the Cyclic learning rate [12], Shake Shake regularization [13], SGDR [14], we believe that as far as SGD based methods are concerned, there is a correlation between a measure of regularity of a dataset, learning rate policy, maximum global batch size and number of workers. We plan to investigate a way to automatically learn this relation. More than this, we want to analyze the relationship between width and depth of convolutional networks, total number of inputs, total number of classes, and number of examples per class.

References

[1] Dean, Jeffrey, et al. “Large scale distributed deep networks.” Advances in neural information processing systems. 2012.

[2] Chilimbi, Trishul M., et al. “Project Adam: Building an Efficient and Scalable Deep Learning Training System.” OSDI. Vol. 14. 2014.

[3] Goyal, Priya, et al. “Accurate, Large Minibatch SGD: Training ImageNet in 1 Hour.” arXiv preprint arXiv:1706.02677 (2017).

[4] Cho, Minsik, et al. “PowerAI DDL.” arXiv preprint arXiv:1708.02188 (2017).

[5] Kurth, Thorsten, et al. “Deep Learning at 15PF: Supervised and Semi-Supervised Classification for Scientific Data.” arXiv preprint arXiv:1708.05256 (2017).

[6] https://github.com/intel/caffe

[7] http://image-net.org/

[8] http://places2.csail.mit.edu/results2016.html

[9] Ioffe, Sergey, and Christian Szegedy. “Batch normalization: Accelerating deep network training by reducing internal covariate shift.” International Conference on Machine Learning. 2015.

[10] Ioffe, Sergey. “Batch Renormalization: Towards Reducing Minibatch Dependence in Batch-Normalized Models.” arXiv preprint arXiv:1702.03275 (2017).

[11] https://github.com/01org/MLSL

[12] Smith, Leslie N., and Nicholay Topin. “Exploring loss function topology with cyclical learning rates.” arXiv preprint arXiv:1702.04283 (2017).

[13] Gastaldi, Xavier. “Shake-Shake regularization.” arXiv preprint arXiv:1705.07485 (2017).

[14] Loshchilov, Ilya, and Frank Hutter. “SGDR: stochastic gradient descent with restarts.” arXiv preprint arXiv:1608.03983 (2016).
