Rapid rise in power consumption

Sustainability is a high priority for SURF. We have launched several initiatives to increase understanding and raise awareness of sustainable enterprise and green ICT. Power usage plays a key role in this. The explosive worldwide growth in the number of data centres and servers is causing a rapid rise in power consumption. In a 2016 report, consultancy firm CE Delft estimated that power consumption by data centres would rise by 80% between 2013 and 2020. The Netherlands is a popular location for data centres.

The same study also found, however, that on balance, consumption in the ICT sector is expected to drop by 10 to 20%, due in particular to cloud-based applications and efficiency improvements. The case study described in this article supports these estimates.

SURFdrive is a SURF service that offers cloud storage to education and research institutions. It is also the SURF service with by far the fastest growing number of users: this cloud storage is currently being used by 16,000 lecturers and students. SURFdrive now has 60 servers. In my study, I used several of those servers to test the potential benefits of using power management in a production environment.

Incredibly hot processor

Power management has various definitions, but is understood here to mean the features of computer components intended to save electricity. The features offered by the different server manufacturers, such as Dell or HP, can vary. Companies that produce individual components, such as Intel and AMD with their processors, also aim for energy savings. A processor (also called a CPU) is responsible for performing the computer's calculations. It consumes a large portion of the power required to run a server, even though it is often busy only about 10% of the time.

So it only rarely needs to run at full capacity. Anyone who has ever held their hand near a running processor knows how incredibly hot it can get. Energy is lost through this heat dissipation. Unfortunately, it is impossible for all energy to be fully utilised. So what can you do to conserve more of this energy?

Automatically lower clock speed

Give your processor a break! When it comes to processors, speed can be a confusing concept. One way to speed up a processor, however, is to increase the clock speed. The clock speed indicates the frequency at which the processor steps through its work; a modern 3 GHz processor runs through 3 billion clock cycles per second. However, the higher the clock speed, the hotter the processor becomes, resulting in more lost energy. If a processor is not being fully utilised, keeping the clock speed high seems like a waste if the processor could function equally well at a lower speed.

And so it is. A lower clock speed reduces energy consumption and increases processor utilisation. But what if the processor does need to be fully utilised? Then it would be counter-productive to lower the clock speed, because that would slow down your processor. That is why Intel and AMD processors are equipped with demand-based switching, also referred to as dynamic voltage and frequency scaling. This enables the processor to automatically lower the clock speed in times of limited utilisation and to raise it again as utilisation increases. This conserves electricity without noticeably affecting performance.
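To see whether frequency scaling is actually active on a server, you can inspect what the operating system reports. The following is a minimal sketch that assumes a Linux server with a cpufreq driver loaded; the article itself does not say which operating system the SURFdrive servers run, and the function name `read_cpufreq` is purely illustrative. It reads the standard cpufreq files that the Linux kernel exposes under `/sys/devices/system/cpu`.

```python
from pathlib import Path

# Assumption: a Linux host with a cpufreq driver. The kernel then exposes
# one cpufreq directory per core below this sysfs path.
CPUFREQ_ROOT = Path("/sys/devices/system/cpu")


def read_cpufreq(root=CPUFREQ_ROOT):
    """Return {core: {"governor": str, "cur_khz": int, "max_khz": int}}."""
    state = {}
    for policy_dir in sorted(Path(root).glob("cpu[0-9]*/cpufreq")):
        try:
            state[policy_dir.parent.name] = {
                # Name of the active scaling policy, e.g. "ondemand"
                "governor": (policy_dir / "scaling_governor").read_text().strip(),
                # Current and maximum clock speed, reported in kHz
                "cur_khz": int((policy_dir / "scaling_cur_freq").read_text()),
                "max_khz": int((policy_dir / "scaling_max_freq").read_text()),
            }
        except (OSError, ValueError):
            continue  # skip cores whose cpufreq files are missing or unreadable
    return state


if __name__ == "__main__":
    for core, info in read_cpufreq().items():
        cur_mhz = info["cur_khz"] / 1000
        max_mhz = info["max_khz"] / 1000
        print(f"{core}: {info['governor']}, {cur_mhz:.0f} of {max_mhz:.0f} MHz")
```

On a lightly loaded machine with scaling enabled, the current frequency will typically sit well below the maximum. If the script prints nothing, the cpufreq files are not available, which may mean the feature is switched off in the firmware settings or that no driver is loaded.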

It is surprising that this technique, which is by no means new, is still used so infrequently by manufacturers and users. Though Dell has included it in many of its latest generation servers, many other manufacturers have not. Although it can in principle be used in any server with an Intel or AMD processor (i.e. virtually all of them), it is not always very accessible. The smaller data centres in particular, of which the Netherlands has so many, are still not using it much. Giants such as Google, where power consumption plays a larger role in the total cost of ownership than the hardware purchase price, are applying it much more frequently.

Results

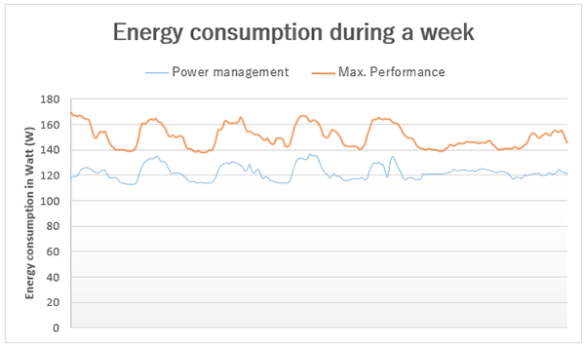

At SURFdrive, servers converted for power management use approximately 20% less electricity. When the clock speed goes down, processor utilisation goes up: the average rose from 10% to 20%. Peak utilisation increased from 20% to 30%, still accounting for just a modest share of maximum capacity.
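Why utilisation rises when the clock speed drops can be illustrated with a deliberately simplified model: if the workload needs a fixed number of clock cycles per second, the fraction of time the processor is busy is that demand divided by the clock speed. The sketch below is an illustration only. The 3 GHz starting point is borrowed from the example earlier in this article, the 1.5 GHz target is hypothetical, and the model ignores effects such as memory-bound work and idle states. It is not a description of how the SURFdrive measurements were made.

```python
def utilisation_after_scaling(utilisation_before, freq_before_ghz, freq_after_ghz):
    """Estimate utilisation at a new clock speed for an unchanged workload."""
    # Cycles per second the workload actually needs, in units of GHz
    demand_ghz = utilisation_before * freq_before_ghz
    return demand_ghz / freq_after_ghz


# Hypothetical case: 10% busy at 3.0 GHz, then scaled down to 1.5 GHz
print(f"{utilisation_after_scaling(0.10, 3.0, 1.5):.0%}")
```

In this model, halving the clock speed doubles utilisation, which matches the order of magnitude of the measured rise from 10% to 20%.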

To illustrate, these results can be translated into savings on costs or CO2 emissions. Note that these figures are only estimates intended to provide an idea of the amounts. Based on the CE Delft report, data centres consume a total of 1.36 TWh per year. Reducing that consumption by 20% would save about 272 GWh per year. Assuming a price of €0.10 per kWh, that amounts to roughly €27 million per year. In terms of CO2 emissions, this is equal to the average emissions of over 20,000 Dutch households.
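The cost estimate can be checked step by step. The sketch below uses only the three inputs quoted above (1.36 TWh per year, a 20% reduction, and an assumed price of €0.10 per kWh); the function and constant names are illustrative. It does not attempt the CO2 comparison, since the emission factors behind that figure are not given here.

```python
ANNUAL_CONSUMPTION_TWH = 1.36  # data centre consumption, per the CE Delft report
SAVING_FRACTION = 0.20         # reduction measured on the SURFdrive servers
PRICE_EUR_PER_KWH = 0.10       # assumed electricity price

KWH_PER_TWH = 1_000_000_000    # 1 TWh = 10^9 kWh


def annual_saving(consumption_twh, fraction, price_eur_per_kwh):
    """Return (saved energy in kWh, saved cost in euros) per year."""
    saved_kwh = consumption_twh * KWH_PER_TWH * fraction
    return saved_kwh, saved_kwh * price_eur_per_kwh


saved_kwh, saved_eur = annual_saving(
    ANNUAL_CONSUMPTION_TWH, SAVING_FRACTION, PRICE_EUR_PER_KWH
)
print(f"Energy saved: {saved_kwh / 1e6:.0f} GWh per year")
print(f"Cost saved: EUR {saved_eur / 1e6:.1f} million per year")
```

With these inputs, the saving comes to about 272 GWh and roughly €27 million per year. Changing the assumed price or the saving fraction scales the result proportionally.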

About the author

Diederik de Graaf studies System and Network Engineering at the Amsterdam University of Applied Sciences. He previously designed a visualisation platform that monitors energy consumption for the Greening The Cloud project together with a team of fellow students. He conducted the above study within the framework of his internship at SURFsara.
