Quick recap: what is the ORI DuckLake?

The ORI DuckLake is a publicly accessible, serverless data catalog for open research information about Dutch institutions. Instead of locking data away in a proprietary database that requires a running server and credentials, we store everything as Parquet files on SURF Object Store. Anyone with DuckDB — or a tool that wraps it — can query the data directly, without spinning up infrastructure, without a licence fee, and without asking permission.

Why does this matter? Because Open Research Information should be genuinely open: not just open in principle, but open in practice — reachable by a researcher with a laptop, a data steward writing a dashboard, or a national monitoring service aggregating metrics across institutions.

The design philosophy we laid out in our earlier post holds: no always-on database servers, reproducible pipelines, FAIR by default, and a clear path toward a national ORI analytics backbone that institutions can plug into without losing data ownership or sovereignty.

What's in the lake now?

We have populated the ORI DuckLake — codenamed Sprouts — with real data across four schemas:

| Schema | What it contains |
|---|---|
| openalex | Works, authors, institutions, sources, topics, funders; global coverage, filtered for NL institutions |
| openaire | Publications, organisations, projects, datasets, software from the European open access infrastructure |
| cris | Publications from Dutch institutional repositories (CRIS exports) |
| openapc | Article processing charges paid by Dutch institutions |

All four data sources are now live and SQL-queryable from a single catalog endpoint — no credentials required:

https://objectstore.surf.nl/cea01a7216d64348b7e51e5f3fc1901d:sprouts/catalog.ducklake

This means you can, right now, join OpenAlex publication records with CRIS repository exports and check which DOIs appear in one source but not the other. You can look up the ROR coverage of Dutch institutions across OpenAIRE. You can correlate APC costs with open access status. These are exactly the kinds of questions the PID to Portal project is designed to answer.
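For readers who prefer to see the mechanics, here is a minimal Python sketch of the first question: which DOIs appear in OpenAlex but not in the CRIS exports. The schema names `openalex` and `cris` come from the overview above; the table names `works` and `publications`, the `doi` column, the `sprouts` alias and the exact `ATTACH` options are assumptions for illustration, so inspect the catalog before relying on them. The query strips the `https://doi.org/` prefix that OpenAlex uses for DOIs, so both sides compare as bare, lowercase identifiers.

```python
CATALOG_URL = (
    "https://objectstore.surf.nl/"
    "cea01a7216d64348b7e51e5f3fc1901d:sprouts/catalog.ducklake"
)


def attach_statements(url: str = CATALOG_URL, alias: str = "sprouts") -> list[str]:
    """SQL statements that attach the lake. The alias and READ_ONLY option are assumptions."""
    return [
        "INSTALL ducklake",
        "LOAD ducklake",
        f"ATTACH 'ducklake:{url}' AS {alias} (READ_ONLY)",
    ]


def doi_gap_query(
    left: str = "openalex.works",        # hypothetical table name
    right: str = "cris.publications",    # hypothetical table name
    alias: str = "sprouts",
) -> str:
    """Build a query for DOIs present in `left` but missing from `right`."""
    # Normalise to a bare, lowercase DOI so URL-style and plain DOIs match.
    norm = r"lower(regexp_replace(doi, '^https?://(dx\.)?doi\.org/', ''))"
    return (
        f"SELECT {norm} AS doi FROM {alias}.{left} WHERE doi IS NOT NULL\n"
        "EXCEPT\n"
        f"SELECT {norm} AS doi FROM {alias}.{right} WHERE doi IS NOT NULL"
    )


def find_doi_gap(limit: int = 20) -> list[tuple]:
    """Run the query against the live lake (needs network access and `pip install duckdb`)."""
    import duckdb  # third-party; imported here so the helpers above work without it

    con = duckdb.connect()
    for statement in attach_statements():
        con.execute(statement)
    return con.execute(doi_gap_query() + f"\nLIMIT {limit}").fetchall()
```

Calling `find_doi_gap()` would return up to 20 DOIs that are in the assumed OpenAlex table but not in the assumed CRIS table; swapping the `left` and `right` arguments of `doi_gap_query` gives the opposite direction.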

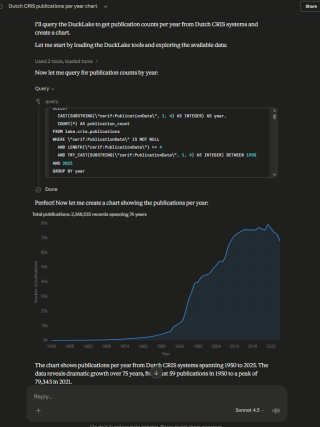

What's new: ORI Agentic Tools

Knowing the data is there is one thing. Actually querying it is another. DuckDB SQL is powerful, but not everyone wants to write UNNEST(authorships) joins to answer "how many Dutch publications have an ORCID on the first author?"
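To make the nesting concrete, the sketch below walks through that first-author question in plain Python. The records are made up and only mimic the shape OpenAlex uses for works (a list of `authorships`, each with an `author_position` and a nested `author.orcid`); how these columns are actually typed in the lake is an assumption. The ORCID shown is the fictitious test identity from ORCID's own documentation.

```python
# Made-up sample records shaped like OpenAlex works.
works = [
    {"id": "W1", "authorships": [
        {"author_position": "first",
         "author": {"orcid": "https://orcid.org/0000-0002-1825-0097"}},
        {"author_position": "last", "author": {"orcid": None}},
    ]},
    {"id": "W2", "authorships": [
        {"author_position": "first", "author": {"orcid": None}},
    ]},
    {"id": "W3", "authorships": []},
]


def unnest_authorships(records):
    """Yield one (work_id, authorship) pair per nested entry, like UNNEST does per row."""
    for work in records:
        for authorship in work.get("authorships") or []:
            yield work["id"], authorship


def count_first_author_orcid(records) -> int:
    """Count works whose first author carries a non-empty ORCID."""
    matching_ids = {
        work_id
        for work_id, authorship in unnest_authorships(records)
        if authorship.get("author_position") == "first"
        and (authorship.get("author") or {}).get("orcid")
    }
    return len(matching_ids)


print(count_first_author_orcid(works))  # → 1
```

Only W1 qualifies: W2 has a first author without an ORCID and W3 has no authorships at all. Writing the SQL equivalent by hand for every new question quickly gets tedious.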

That's where the new surf-ori/agentic-tools repository comes in. It is an initial release of a set of tools that connect AI agents — specifically Claude — directly to the ORI DuckLake, so that you can ask research data questions in natural language and get real answers back from the actual data.

The repository contains two complementary pieces:

Skills — these teach an AI agent how to think about the ORI data: which tables exist, how identifiers like DOI, ORCID, and ROR are structured across different sources, how to unnest nested columns, and which query patterns work well. Skills are lightweight prompt files that load only when needed, keeping token cost low.

An MCP server (ori-ducklake-mcp) — this gives the agent a live, read-only SQL connection to the DuckLake at runtime. When the agent needs to answer a question, it doesn't guess: it runs a real query against the real data.

Together, the skill tells the agent what to do, and the MCP server gives it the tools to actually do it.
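This division of labour can be pictured with a small sketch of the tool side. It is not the `ori-ducklake-mcp` implementation: `is_single_select` and `run_read_only` are hypothetical helpers, and the keyword check is only a crude first filter (real read-only guarantees should come from how the database is attached). The function accepts any connection whose `execute()` result exposes `description` and `fetchmany()`, which DuckDB's Python connection does.

```python
from typing import Any


def is_single_select(sql: str) -> bool:
    """Crude filter: exactly one statement that starts with SELECT or WITH."""
    body = sql.strip().rstrip(";").strip()
    # Conservative: a semicolon anywhere else (even inside a string) is rejected.
    if not body or ";" in body:
        return False
    first_word = body.split(None, 1)[0].upper()
    return first_word in {"SELECT", "WITH"}


def run_read_only(con: Any, sql: str, max_rows: int = 50) -> dict:
    """Run one query and return the SQL, column names and a capped set of rows."""
    if not is_single_select(sql):
        raise ValueError("only a single SELECT statement is accepted")
    cursor = con.execute(sql)
    columns = [col[0] for col in cursor.description]
    rows = [list(row) for row in cursor.fetchmany(max_rows)]
    # The SQL is returned with the rows so the caller can show the query it ran.
    return {"sql": sql, "columns": columns, "rows": rows}
```

Capping the rows keeps the payload handed back to the agent small, and echoing the SQL lets the user see exactly which query produced the answer.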

Try it yourself

If you use Claude Desktop, add the MCP server to your config:

```json
{
  "mcpServers": {
    "ori-ducklake-sprouts": {
      "command": "python",
      "args": ["-m", "ori_ducklake_mcp"],
      "env": {
        "DUCKLAKE_URL": "https://objectstore.surf.nl/cea01a7216d64348b7e51e5f3fc1901d:sprouts/catalog.ducklake"
      }
    }
  }
}
```

If you use Claude Code, install the skill alongside the MCP server:

```bash
npx skills add surf-ori/agentic-tools@ori-ducklake
```

Then simply ask questions like:

- "How many Dutch publications in OpenAlex have a ROR affiliation?"

- "Which institutions have the most CRIS publications without a DOI?"

- "Show me the OA status distribution across OpenAIRE publications from Dutch universities."

The agent will figure out the right tables, write the SQL, query the lake, and return the answer — with the query visible so you can learn, adapt, or reuse it.
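If you already have other MCP servers configured in Claude Desktop, the config step above amounts to merging one entry into the existing `mcpServers` object rather than replacing the file. The sketch below shows one way to do that safely; `add_server` and `update_config_file` are hypothetical helper names, and the path to the configuration file is left for you to supply, since its location depends on your operating system.

```python
import json
from pathlib import Path

SERVER_NAME = "ori-ducklake-sprouts"
SERVER_ENTRY = {
    "command": "python",
    "args": ["-m", "ori_ducklake_mcp"],
    "env": {
        "DUCKLAKE_URL": (
            "https://objectstore.surf.nl/"
            "cea01a7216d64348b7e51e5f3fc1901d:sprouts/catalog.ducklake"
        )
    },
}


def add_server(config: dict) -> dict:
    """Return a copy of `config` with the ORI DuckLake server added or updated."""
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))  # copy, so the input is not mutated
    servers[SERVER_NAME] = SERVER_ENTRY
    merged["mcpServers"] = servers
    return merged


def update_config_file(path: Path) -> None:
    """Merge the server entry into the JSON config at `path`, creating it if absent."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    path.write_text(json.dumps(add_server(config), indent=2) + "\n", encoding="utf-8")
```

Existing servers and unrelated settings are left untouched; only the `ori-ducklake-sprouts` key is added or overwritten.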

Why agentic access matters for ORI monitoring

The PID to Portal project is fundamentally about making Open Research Information actionable: visible, measurable, and improvable. Building monitoring dashboards is one path to that. But dashboards need to be designed upfront, and they answer the questions you already thought to ask.

Agentic access to the data lake opens a different mode of work: exploratory, conversational analysis. A data steward can ask "why does this institution show lower DOI coverage?" and follow the thread — without waiting for a developer to add a new chart. A researcher can cross-reference sources on the fly. A project manager can get a quick sanity check on a metric without opening a notebook.

This does not replace structured dashboards — it complements them. Think of it as the difference between a report and a conversation with the data.

This is an early release — contributions welcome

agentic-tools is explicitly a work in progress. The initial release covers the core DuckLake skill, the OAI-PMH harvesting patterns for Dutch repositories (openaire-oaipmh), and URN:NBN resolution via the Nationale Resolver (urn-nbn). More is coming: broader schema documentation, richer query patterns, support for additional data sources, and tighter integration with the monitoring workflows being developed in the project.

Pull requests are very welcome. If you work with ORI data and have useful query patterns, identifier crosswalk tricks, or schema knowledge to contribute, the repository is open and licensed under EUPL-1.2. We'd love for this to grow into a community resource — not just a SURF internal tool.

What's next

The filled DuckLake and the agentic tools are a foundation. The next step in the PID to Portal project is building the actual ORI monitoring dashboard on top of this data — interactive, reproducible, and open — using Marimo. That work is now actively underway, and the agentic tools will play a role in the design and prototyping process itself.

We'll keep sharing progress here. In the meantime, the lake is open, the tools are public, and the data is waiting.

→ GitHub: surf-ori/agentic-tools

→ Earlier post: Behind the scenes: experimenting with a next-gen ORI data infrastructure
