Alan Berg

I am an author and consult about Analytics, IT automation, Quality…

As we create tools for education, Learning Analytics research projects and AI models are worth reflecting on.

In this blog, I give a hint of what is possible with LA (Learning Analytics) tooling and AI modeling. I hope this motivates those who are developing LA services to first review what has already been achieved.

Who said college essays are dead? The recent rapid evolution in the capabilities of AI models is astounding. Consider what Nature has just stated about the GPT-3 and ChatGPT models. We are on the verge of a notable change in educational design, one that will optimize learning and defend against modes of cheating. If we are lucky, this will improve our practices as AI incrementally becomes a supportive co-pilot for specific tasks: a co-pilot that takes away the more trivial work and allows us to concentrate on the arduous work of delivery. If we are unlucky, we will have a scatological evolution full of hype, sales talk, and buggy beta versions.

Research in the field of Learning Analytics has been active for around a decade. The research has led to many pilots and hundreds, if not thousands, of dashboards. However, the requirements for research differ from those for scalable production environments. At the largest scale, we have the dashboards that are available as part of Learning Management Systems such as Canvas, Brightspace, Blackboard, and Moodle. We also have examples of large-scale collaboration on algorithms, such as the PAR framework, and worthy tools such as onTask.

The tools that live in the cloud have the advantage of quick incremental updates. Tools such as onTask offer control of your own data and an open community vision of how to collaborate for the greater good. Of course, LMS (Learning Management System) providers have their own large-scale communities, but in the end, they are responsible to their shareholders for delivering profit.

As a thought exercise, I reviewed the proceedings of the global conference for Learning Analytics, along with GitHub and AI repositories, for useful examples. In the next section I give a taste of what is possible with LA tooling, to motivate those who are looking at developing LA services to first review what has already been achieved.

AI models are becoming more embedded in LA interventions. In this section I briefly explore LA tools and then AI models.

As mentioned, onTask is a tool to send tailored emails depending on a set of criteria:

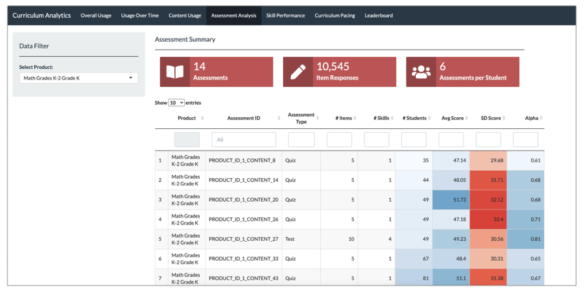
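To make the idea of criteria-driven messages concrete, here is a minimal sketch in Python. It is not onTask's API or data model: the `Student` fields, the thresholds, and the message texts are all hypothetical, chosen only to show how a list of rules can select a tailored email body.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Student:
    # Hypothetical fields; a real tool would read these from course data
    name: str
    quiz_average: float      # percentage, 0-100
    logins_last_week: int


@dataclass
class Rule:
    condition: Callable[[Student], bool]
    message: str


# Hypothetical criteria, checked in order; the first matching rule wins
RULES = [
    Rule(lambda s: s.logins_last_week == 0,
         "We have not seen you online this week. Is anything blocking you?"),
    Rule(lambda s: s.quiz_average < 55,
         "Your quiz average is below the pass mark. Consider the practice set."),
    Rule(lambda s: s.quiz_average >= 85,
         "Great work on the quizzes. The extension material may interest you."),
]
DEFAULT_MESSAGE = "You are on track. Keep going."


def tailored_message(student: Student) -> str:
    """Return the email body selected by the first rule the student matches."""
    body = DEFAULT_MESSAGE
    for rule in RULES:
        if rule.condition(student):
            body = rule.message
            break
    return f"Dear {student.name},\n\n{body}"


if __name__ == "__main__":
    print(tailored_message(Student("Ana", quiz_average=48.0, logins_last_week=3)))
```

Keeping the rules as data rather than nested `if` statements means a lecturer-facing tool could list, reorder, or edit them without touching the selection logic.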

You can find example dashboard code in R in the EdOptimize GitHub repository. The feature set includes platform analytics, curriculum analytics, and implementation analytics.

The line between Academic Analytics and Learning Analytics is blurred for this project. Such blurred lines are common in practice. Another point to note is the popularity of no-code tools such as PowerBI. Even so, many researchers find Python and R more expressive for dashboard building. Code also has the advantage of being in a text format, which is easier to version and update within GitHub. The disadvantage is obvious: you need to have and retain the coding skills within your team(s).

An enhancement to Canvas from the University of Michigan (UMICH) is My Learning Analytics. The code is available on GitHub. I have not tried to get the dashboard running. However, UMICH is advanced in its analytics deployments and is worth tracking.

The views within the dashboard are:

Resources Accessed: Students can see which files, videos, and other resources are popular in the course and accessed most often by classmates. Resources are color-coded in blue if the student has accessed them or grey if they have not. File names have active links to immediately open resources they have missed.

Assignment Planning: Students can view their progress and upcoming assignments with a Grade Progress bar and Assignments Due by Date list. Students can also set course and assignment goals to help with their course planning and engagement.

Grade Distribution: This visualization provides a bar chart that shows the distribution of grades in the class along with the number of students in the class and the average and median grades. This visualization has been designed to protect student privacy by binning low outlier grades.
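The privacy idea behind the Grade Distribution view, binning low outlier grades, can be sketched in a few lines of Python. This is an illustration only, not the My Learning Analytics implementation: the function names, the 0-100 grade scale, the bin width, and the `floor` threshold below which grades share one bin are all assumptions.

```python
from collections import Counter
from statistics import mean, median


def grade_distribution(grades, bin_width=10, floor=50):
    """Count grades per bin on an assumed 0-100 scale.

    Every grade below `floor` lands in a single "<floor" bin, so an
    individual low outlier cannot be read off the chart.
    """
    counts = Counter()
    for grade in grades:
        if grade < floor:
            label = f"<{floor}"
        else:
            # Clamp so that a grade of exactly 100 falls in the top bin
            low = min(int(grade // bin_width) * bin_width, 100 - bin_width)
            label = f"{low}-{low + bin_width}"
        counts[label] += 1
    return dict(counts)


def summary(grades):
    """Class size, average, and median, as shown next to the chart."""
    return {"n": len(grades), "mean": round(mean(grades), 1), "median": median(grades)}


if __name__ == "__main__":
    grades = [12, 35, 48, 52, 67, 68, 71, 88, 100]
    print(grade_distribution(grades))
    print(summary(grades))
```

With the sample list, the three lowest grades are reported together as one bar rather than as three separate, identifiable values.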

The dashboard encapsulates years of experience from an American institution. Choosing another organisation's dashboard for comparison gives you a giant's shoulders to stand on.

Is the future here now? Probably not. However, for the current state of the art, have a look at ChatGPT at OpenAI.

AI models are increasingly embedded in standard applications such as Microsoft Office, for example to check grammar or, soon, to generate images from text prompts. The same will be true for LMSs and other education-oriented products. Therefore, consider experimenting with models such as ChatGPT which, according to OpenAI: “The dialogue format makes it possible for ChatGPT to answer follow-up questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests.” The reason you would want to experiment is that garbage in makes for biased and unacceptable output. Despite the relative ease of coding, before deploying you would really need to be certain that AI prompts built from user input do not lead to embarrassment.

For those wishing to play with AI and start to mock up LA-like intervention services, the transformer models in the Hugging Face repositories are at times trivial to deploy and do not necessarily require you to use your own data. For example, in Python, to generate text based on a prompt you can run these lines of code:

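```python
from transformers import pipeline

# Load a small pretrained text-generation model from the Hugging Face Hub
generator = pipeline("text-generation", model="distilgpt2")

# Generate one continuation; max_length counts tokens, including the prompt
generator(
    "Rubbish in is rubbish out",
    max_length=60,
    num_return_sequences=1,
)
```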

Output:

"Rubbish in is rubbish out there, but hey, you're gonna go. Go to your store and get a bag of stuff, it'll look good. I can't get your own food, but the only way to get a bag of stuff is to pick up what you find and move"

The coding is trivial and the output is interesting, as in rubbish.

There have been numerous articles in the technical press pointing to this lack of quality. However, if you defend the input (known as prompt engineering) and improve the models' architecture and data processing pipelines, you can get much nearer to the future. For example, in an educational context you might want to generate multiple choice questions for your class. Models that need no extra training can, with the right prompt engineering, now deliver content to the lecturer. The lecturer will need to sift through the content and filter it, but this is less work than before the AI was involved. This highlights a crucial point: for any type of AI intervention that significantly impacts students, make sure there is a human in the loop.
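As a minimal sketch of these two ideas, defending the input and keeping a human in the loop, the Python below builds a constrained prompt and holds generated drafts in a queue until a lecturer approves them. Everything here is hypothetical: the template wording, the `MAX_TOPIC_CHARS` limit, and the `ReviewQueue` class are illustrative assumptions, and the model call itself is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical template; the wording is an assumption, not a tested recipe
PROMPT_TEMPLATE = (
    "You are helping a lecturer prepare a quiz.\n"
    "Write {n} multiple choice questions about the topic below.\n"
    "Each question has four options labelled A to D and one correct answer.\n"
    "Topic: {topic}\n"
)
MAX_TOPIC_CHARS = 200  # assumed limit to keep user input short and focused


def build_prompt(topic: str, n: int = 3) -> str:
    """Place cleaned user input inside a fixed template."""
    cleaned = " ".join(topic.split())  # collapse newlines and repeated spaces
    if not cleaned:
        raise ValueError("topic must not be empty")
    if len(cleaned) > MAX_TOPIC_CHARS:
        raise ValueError("topic is too long")
    return PROMPT_TEMPLATE.format(n=n, topic=cleaned)


@dataclass
class ReviewQueue:
    """Drafts wait here until a human accepts or rejects them."""
    pending: List[str] = field(default_factory=list)
    approved: List[str] = field(default_factory=list)

    def submit(self, draft: str) -> None:
        self.pending.append(draft)

    def review(self, accept: Callable[[str], bool]) -> None:
        # `accept` stands in for the lecturer's decision on each draft
        for draft in self.pending:
            if accept(draft):
                self.approved.append(draft)
        self.pending.clear()


if __name__ == "__main__":
    print(build_prompt("  photosynthesis \n basics "))
```

In a real service, the text returned by the model for `build_prompt(...)` would be passed to `submit`, and only the `approved` list would ever reach students.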

For Python developers building such an app, consider reading: Transformers for Natural Language Processing

With improved models such as GPT-3, it is only a question of time before the unscrupulous start to deliver large chunks of their assignments through these means. By working with the models and experimenting, you will get a sense of how near this type of cheating is to reality. The positive side is that text generation and question-and-answer generation are valid methods. Given the velocity of current developments, you may later be able to use text generation as part of an automated avoidance strategy when spotting misconceptions during learning.

Due to their rapid evolution and improving validity, transformer models such as GPT are going to shape educational product development. It is good to know what is currently possible, especially if it does not require using your data or more than a few lines of code.

I encourage those developing new Learning Analytics services to look at what has already been achieved. This approach will provide you with material that can be commented on during co-development. Playing with transformer AI models is straightforward, at least to start. For new services, consider designing in AI as the co-pilot and placing humans in the loop.

