Virtual Reality (VR) will be familiar to most people: the use of display and interaction technology, usually a head-worn device with handheld controllers, with which you are fully immersed in a computer-generated world. But the term Extended Reality (XR) might not be as well-known. XR is an umbrella term used to encompass the complete spectrum of technologies and human-computer interactions that range from fully virtual environments (VR) to environments that combine virtual elements with a view of the real world. The latter form is usually referred to as Augmented Reality (AR) or Mixed Reality (MR).

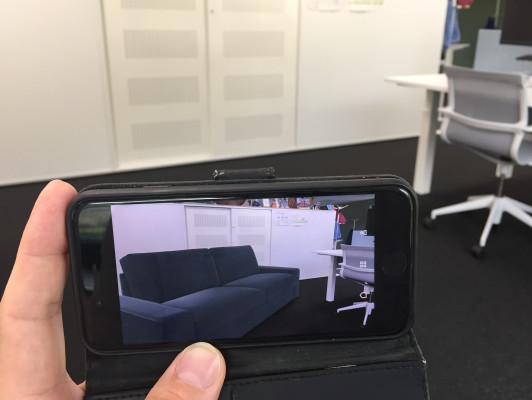

Below are a few examples of AR/MR in action. The first is the Ikea Place app running on an iPhone. It shows a virtual couch in a real-life office. This type of handheld AR is becoming more commonplace, with both Apple and Google providing software toolkits for easier development of AR applications. It becomes especially interesting when the AR world is shared between users. For example, Snapchat recently published a proof-of-concept app that turned a London street into a digital canvas anyone can paint on and view in AR. Several companies are working on a so-called "AR Cloud", which is a 3D virtual twin of the real world that can serve as the basis for many augmented reality applications.

But handheld AR on mobile phones has its limits. Only a very small display window is available for showing the virtual elements and it ties at least one of the user's hands to the device. One step up in technology are head-worn devices such as the Microsoft HoloLens 2, depicted below.

Such a device can provide a fairly realistic and natural view of virtual objects within the real world to the user wearing the headset, including natural hand interaction for manipulation of the objects. This kind of experience is usually called Mixed Reality (MR). See the pictures below of how a user running a commercial anatomical app (HoloHuman) would see a human skeleton in MR, allowing hand interaction to select and inspect parts of the model.

VR, AR, MR, XR? Help!

Unfortunately, the terminology used within the XR spectrum is somewhat diffuse, partly because the different types of media and technologies used inherently have overlap.

Historically, "Augmented Reality" referred to augmenting the real environment with virtual (computer-generated) objects, e.g. the example of handheld AR using an iPhone shown above. This is in contrast to "Virtual Reality", where the user does not see the real environment but is fully immersed in a virtual world (the image shown at the top of this article).

But as more uses of VR and AR technology developed over the years, the distinction became somewhat fuzzy. For example, is showing live camera images of the real world inside a VR headset "augmented reality"?

So the term "Mixed Reality" was introduced in 1994 by Milgram and Kishino to describe all environments in which real world and virtual world objects are presented together (the paper is listed under Further Reading below). However, in more recent times the term Mixed Reality has been used in marketing communication to also cover technologies that fully immerse the user in a virtual world (e.g. Microsoft's Windows Mixed Reality platform includes VR devices). So to avoid even more confusion all these different concepts and their uses are captured these days by the relatively new umbrella term eXtended Reality (XR).

But this still leaves the question of how to specify which subtype of XR we are actually referring to. Usually this is done along a few axes, such as the amount of realism in the virtual world displayed, or how immersed a user is in the virtual world.

Relevance of XR for the SURF community

XR technology (so all of VR, AR and MR) can play several roles for research and education in the SURF community, with different applications for each specific subset.

Fully virtual immersive worlds (i.e. VR) can be used for providing environments and situations that would otherwise be difficult, dangerous or even impossible to create. For example, treatment of phobias can be done relatively safely without exposing the person being treated to, say, actual spiders or a cliff edge a hundred meters above ground level. 360-degree video in VR can be used to provide realistic training scenarios of different situations, with an option for the student to interact with the virtual world if needed. An example from the SURF community is PleitVRIJ from the University of Groningen, a virtual court room in which law students can remotely develop their plea skills together with law students from other universities.

The exact same technology, VR + 360 video, can also be used to provide an interactive setting for parent-child interaction therapy (PCIT). Here, parents can practice handling challenging situations in communicating with their child by first experiencing them in a VR environment and responding to them by making choices. This training can be performed by parents at home, using only their smartphone and a VR viewer.

Partially virtual environments (i.e. AR and MR) can be used to create insightful learning materials using handheld AR on a mobile phone. Showing a 3D scene that the user can navigate around as if it is really in front of them can enhance spatial understanding in a relatively low-cost and playful way. More complex cases could involve the combination of the real world with overlaid virtual objects and annotations, for example to instruct on the workings of complex lab equipment, or to show the inner parts of otherwise opaque machines. Another example is augmenting real historical artifacts in a student's hands with overlaid information, as in the work on Augmented Blended Learning by the 4D Research Lab from the University of Amsterdam. The application AugMedicine - Transplant Cases, developed by the Centre for Innovation at Leiden University, aims to support students' understanding of spatial relations between 2D CT scans and 3D anatomical models.

Technology versus use case

The above is just a sketch of the possibilities of the technology, with many more exciting use cases to be discovered and realized. This can be approached from either the technological side in terms of its possibilities and limits, or from the side of the use case being considered and what it demands of the technology.

In terms of technology there is a spectrum of how much a user is closed off from the real world, both in visual perception and in the possibility of still interacting with the real world. For example, although the well-known Pokemon Go game provided players with virtual avatars to locate through their mobile phone screens, those players were still fully interacting with the real world (to the annoyance of many not playing the game). Here, the overlay of simple virtual objects on top of a view of the real world was the main use of the mobile phone, and judging by the game's popularity it was a good fit. In contrast, using a mobile phone in a Google Cardboard-style viewer (a cardboard box with lenses into which you slide your phone to use VR) leaves much to be desired in terms of immersion, viewing comfort and quality, compared to dedicated VR headsets. So a mobile phone is of limited value in that respect, even though the same underlying technology is used as for Pokemon Go.

Starting from the goals of a use case and how XR technology can help in meeting those goals provides other insights. For example, the PleitVRIJ project mentioned above had the goal to provide training for law students in a realistic courtroom setting that they would otherwise not have access to. The use of a VR headset and 360 video allowed a student to be placed in a realistic virtual representation of an actual court room, while still interacting with the real world through voice-only conferencing. The latter part was crucial in the experience, as a visual-only view of the court room would not provide much benefit.

Challenges

As it is still relatively early days in the development and application of XR technology there are a number of challenges in using and applying the technology. In discussions with contacts at SURF member institutes a number of recurring issues were indicated.

Expertise and sharing

Although expertise with XR usage and development is present at different institutes, it is quite scattered and there is no good central overview of who knows and produces what. Cooperation could also be improved, including sharing of applications, but certainly not limited to only that aspect.

Development effort

Development of XR applications takes a lot of effort and resources. Reuse of existing code and applications, or even learning from each other's implementations, would be a benefit to many. But this raises issues in the realm of intellectual property rights and commercial goals.

Hardware-related issues

The technology, mostly headsets for VR and MR, is expensive and usually requires a substantial investment. This is especially true when, say, large groups of students need to be accommodated. There are also challenges in maintaining the hardware and its installed software.

In the current COVID-19 times a specific issue is that headsets are usually kept in a lab setting, but those are mostly off-limits now. Distributing headsets amongst students at home for the time being is an option, of course. But even when using headsets in a lab setting is possible, sharing headsets between multiple users can become an issue due to hygiene restrictions.

Privacy and ethics

There are plenty of issues relating to privacy and ethics in the use of XR, especially in the context of education and research. For example, the recent announcement by Facebook that a Facebook account is required for new Oculus VR headsets and their users has caused quite some concern in academic circles. In general, data on XR usage and its users can be sensitive, more so than location tracking based on, for example, GPS, because it includes data on the user's actual focus (head posture and eye gaze) as well as biometrics.

With the expectation that even more sensors will be added to future devices, coupled with cloud-based processing for semantic understanding, the data gathered will only become more sensitive. And most XR technologies use some form of camera-based tracking, where issues of what gets filmed, stored and extracted from those images become relevant.

On the ethical side XR experiences can, especially when using immersive VR, upset or even traumatize users, due to the enhanced realism of the environments and feeling of being locked into the experience.

As a result a focus on "Responsible XR" is emerging.

Project members and activities

SURF together with the Centre for Innovation at Leiden University (Donna Schipper), University of Amsterdam (Robert Belleman) and Delft University (Arno Freeke) is exploring a number of these challenges in the SURF eXploRe project, with the goal of having a starting point together with the SURF community to move forward on use of XR in research and education. More activities are expected to follow the current project in 2021 based on the priorities identified in the SURF 2Jarenplan 2021-2022 (Dutch only).

A first issue tackled is to provide an overview and guidelines to those within the SURF community that want to familiarize themselves with XR, or even want to experiment with XR without necessarily having the required technical knowledge. For this a "Getting started with XR" toolkit is being developed within the project that summarizes the basics and provides practical guidance.

A second focus is the use of 360 video in VR, as this is a relatively straightforward form of XR that has many interesting uses in training and education. In short (and much too simplified) an off-the-shelf 360 video camera is needed to record the necessary footage, the videos are combined into a story line with decision points that require input from the user and a VR headset is then used for displaying this story line and getting the input. An existing 360 video framework from the University of Amsterdam is being extended to become more powerful with the goal of making it freely available within the Dutch research and education community.
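To make the idea of a story line with decision points a little more concrete, here is a minimal sketch in Python. It is not the University of Amsterdam framework, whose internals are not described here; the `Scene` class, the `play_story` function, the scene names and the video file names are all hypothetical and chosen only for illustration. Each scene is one 360 video clip, and its choices map a user's input to the next scene.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Scene:
    """One 360 video clip plus the choices offered when it ends (hypothetical model)."""
    video_file: str  # illustrative file name, not a real asset
    choices: dict[str, str] = field(default_factory=dict)  # choice label -> next scene id


def play_story(scenes: dict[str, Scene], start: str, answers: list[str]) -> list[str]:
    """Walk the story line and return the video files in playback order.

    `answers` stands in for the input a user would give inside the headset.
    """
    played = []
    current = start
    remaining = iter(answers)
    while True:
        scene = scenes[current]
        played.append(scene.video_file)
        if not scene.choices:  # no decision point, so the story ends here
            return played
        answer = next(remaining, None)
        if answer not in scene.choices:
            raise ValueError(f"scene {current!r} has no choice {answer!r}")
        current = scene.choices[answer]


# A tiny three-scene story with one branch (assumed example content).
story = {
    "intro": Scene("intro.mp4", {"object": "objection", "wait": "verdict"}),
    "objection": Scene("objection.mp4", {"continue": "verdict"}),
    "verdict": Scene("verdict.mp4"),
}
```

Calling `play_story(story, "intro", ["wait"])` would play the intro followed directly by the verdict, while answering `["object", "continue"]` takes the detour through the objection clip. A real application would replace the list of answers with gaze or controller input and actually render the video in the headset.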

As mentioned in the introduction, VR as a technology is fairly well-known, while AR and especially MR are behind in exposure. So to give more insight into the possibilities of Mixed Reality, a separate blog post on the Microsoft HoloLens 2 is being prepared. This will give a more detailed overview of the device and its possibilities and limits in creating MR experiences.

Lastly, the use of "Social VR" is further explored, which is especially relevant in the current situation with respect to COVID-19. The use of VR technology can provide more immersive social experiences and settings than plain video-conferencing, but it comes with its own challenges (for example the low availability of VR equipment).

Also, a SURF podcast on the subject of XR is being prepared and should be published in the coming months.

The project page at https://www.surf.nl/en/project-exploring-vr-ar-and-mr is the central place to gather project updates and output in the coming period. It also provides contact details.

Related activities

An activity not part of the project, but very much related, is the XR ERA initiative started by the Centre for Innovation at Leiden University. It is a community-driven platform to promote collaboration on XR for education and research in academia. The community was started recently and is slowly growing, but more members are welcome!

For SURF community members that have novel ideas on how to apply XR to research and education the Stimuleringsregeling Open en online onderwijs funding call could provide possibilities to realize them. The call's two themes are Online Education and Open Learning Materials and more information on the call and its specifics can be found through the link above (Dutch only). The deadline for entering a proposal is December 15th, 2020.

Finally, a new edition of the VARR Out event (on Virtual and Augmented Reality for Research) is planned for the beginning of 2021, with a concrete date to be announced.

Further reading

Here are some references that contain more detailed treatments of some of the topics included above.

The XR4ALL Landscape Report (November 2019) provides a very detailed overview of the potential of XR technology and its applications, including backgrounds on relevant business trends in the use of XR. It's available through http://xr4all.eu/resources/.

Responsible VR - Protect consumers in virtual reality (March 2020) by Dhoya Snijders et al. from the Rathenau Institute describes many of the privacy and ethical issues of use of VR and includes policy suggestions. It can be found on https://www.rathenau.nl/en/digital-society/responsible-vr.

Nep Echt - Verrijk de wereld met augmented reality (October 2020) also by Dhoya Snijders et al. from the Rathenau Institute investigates the potential impact of AR in how we see and treat "reality" when it is augmented with all kinds of virtual content. See https://www.rathenau.nl/nl/digitale-samenleving/nep-echt

What is Mixed Reality? (August 2020) by Microsoft Corporation provides a high-level overview of the Microsoft definition of MR and VR.

A Taxonomy of Mixed Reality Visual Displays (December 1994) by Paul Milgram and Fumio Kishino is a much older academic classification of relevant display technologies and terminology.
